// MIT study found the technology struggled to ID women with darker skin tones

// Amazon has been marketing the technology to police departments

// The tech giant denies the validity of the research

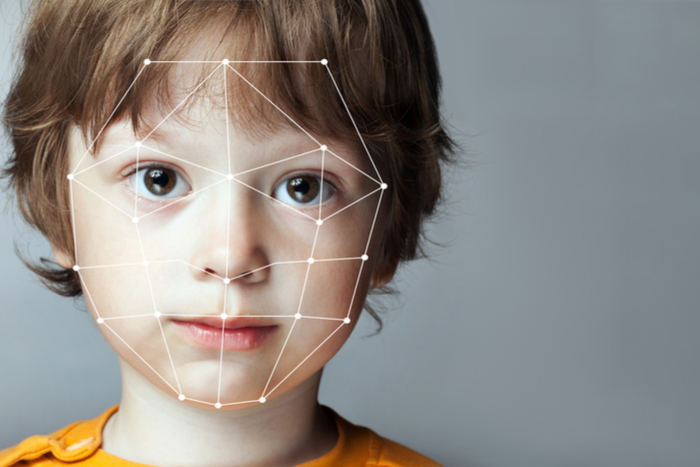

Amazon’s recently developed facial recognition technology could be biased against women of colour, according to a new study.

Last week, a study of Amazon’s Rekognition technology by Joy Buolamwini of the MIT Media Lab and Deborah Raji of the University of Toronto found that error rates rose significantly when the system attempted to identify women with darker skin tones.

The retail giant’s technology, which it has recently been marketing to police departments, was found to be highly accurate when identifying the gender of men.

However, when attempting to identify the gender of women with darker skin tones, the error rate rose to 31 per cent.

“In light of this research, it is irresponsible for the company to continue selling this technology to law enforcement or government agencies,” Buolamwini, who has conducted similar research into Microsoft and IBM’s facial recognition technology, wrote.

“If you sell one system that has been shown to have bias on human faces, it is doubtful your other face-based products are also completely bias free.”

Her earlier studies of Microsoft’s and IBM’s technology found that both systems similarly struggled to identify darker-skinned women accurately, but both companies have since updated their technology to reduce the error rate.

Amazon has dismissed the validity of the study, with Matt Wood, general manager of artificial intelligence at Amazon Web Services, stating that the study focused on facial analysis rather than facial recognition.

Facial analysis seeks to identify attributes such as gender, whether a person is wearing glasses or whether they have facial hair, while facial recognition focuses on matching a face against other photos of the same face.

“It’s not possible to draw a conclusion on the accuracy of facial recognition for any use case —including law enforcement— based on results obtained using facial analysis,” Wood said.

“The results in the paper also do not use the latest version of Rekognition and do not represent how a customer would use the service today.”
